Welcome to MentDB Weak Server!

Control all your data with a single programming language: MQL! This project represents 750,000 lines of source code... The server and the editor (client) are open source (GPLv3).

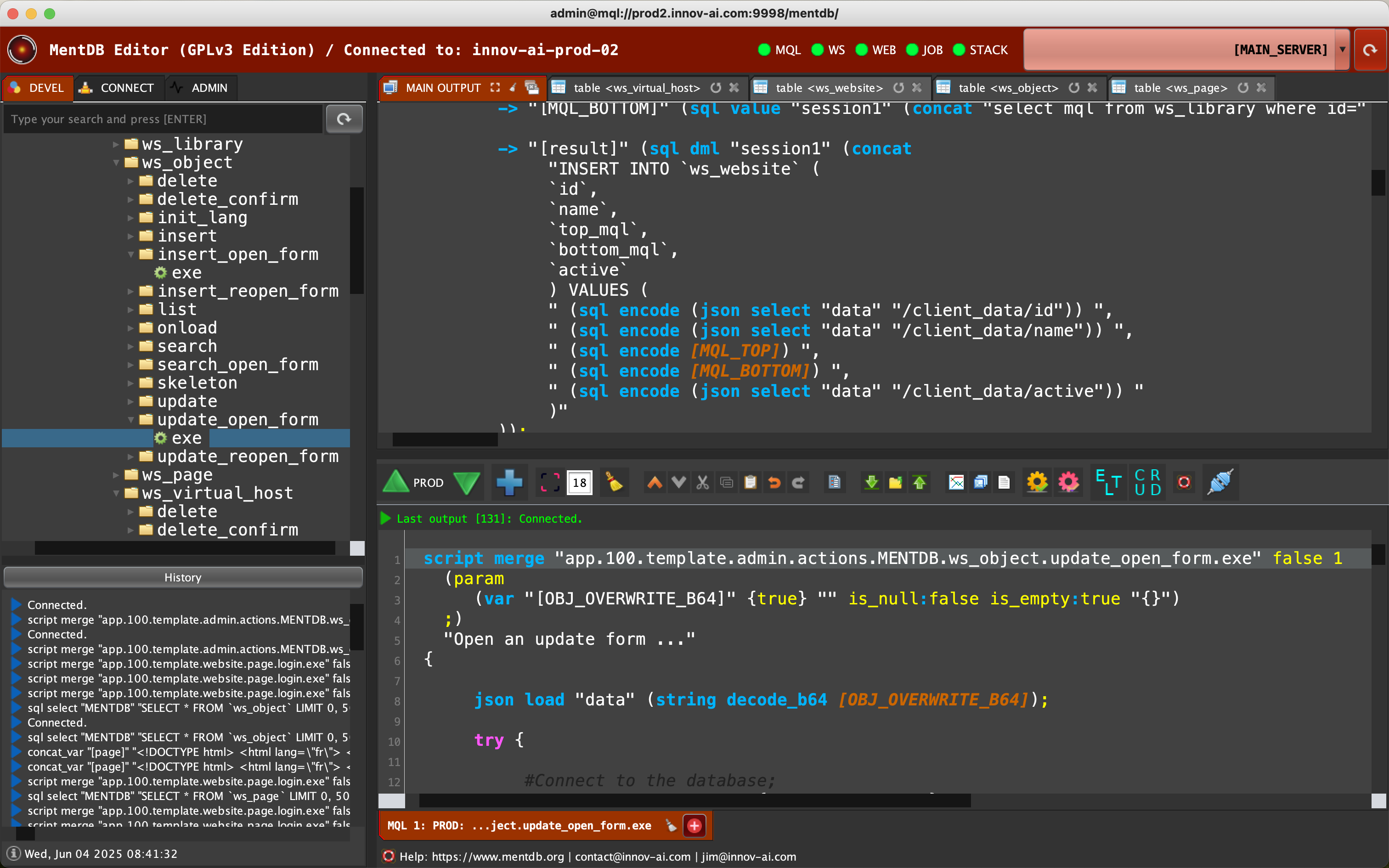

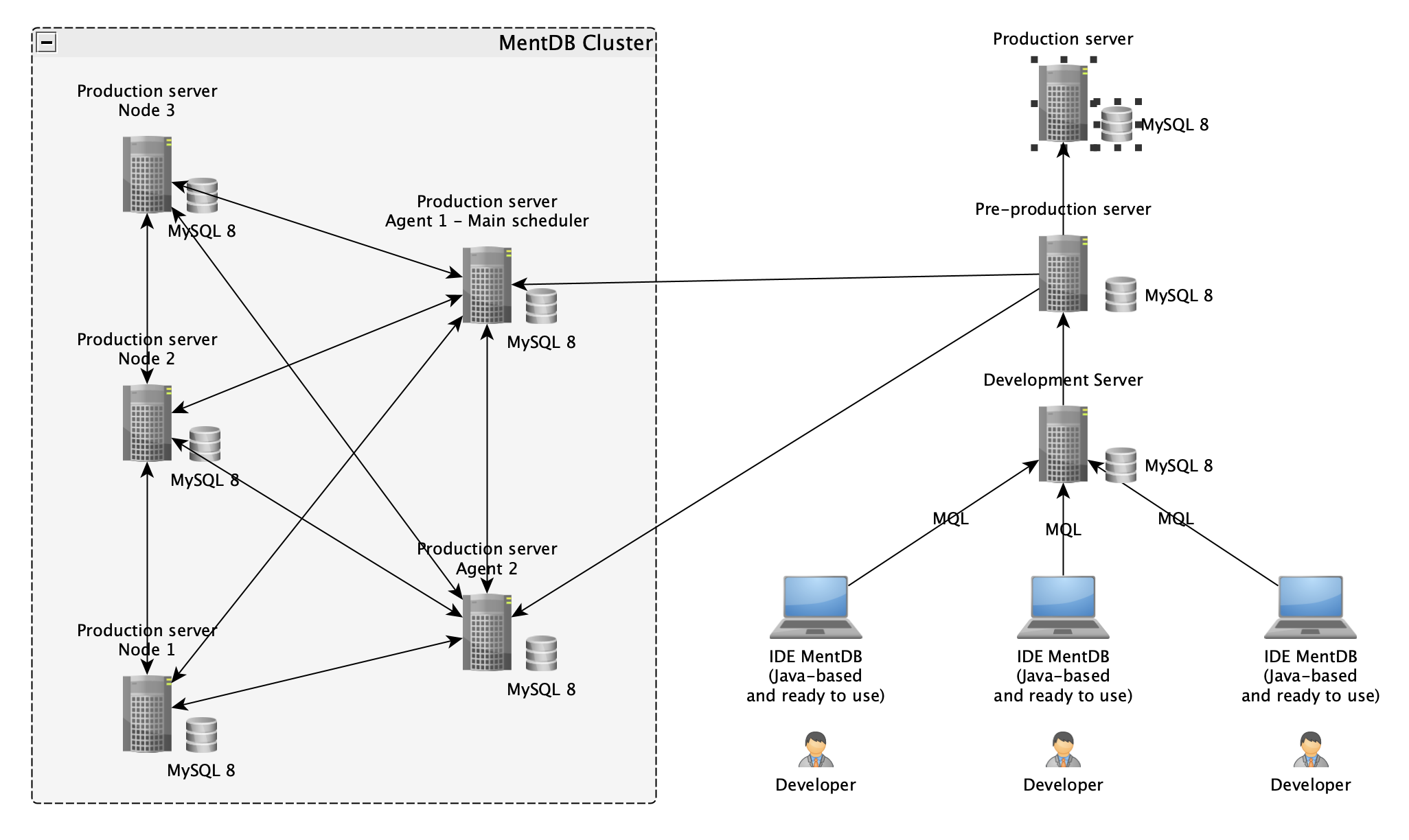

Pilot your MentDB Weak servers in MQL! Automate your business processes with a compressed data language, and manage or generate your API in a Service Oriented Architecture (SOA). Perform Extract Transform Load (ETL) in synchronous or asynchronous processes. Generate web applications and train your AI model... From the same editor, you can access several MentDB servers at the same time. Deployment options, MQL source comparison between servers, and control of the entire architecture are all available here!

This project attempts to reach Smart Earth (technically: World Wide Data). A generalized data driver! One programming language to unify everything, making all the data in our world easily accessible, uniformly exchangeable, and transferable with the maximum possible security. Only in this way can the great problems of our world be solved... It is open source (GPLv3)!

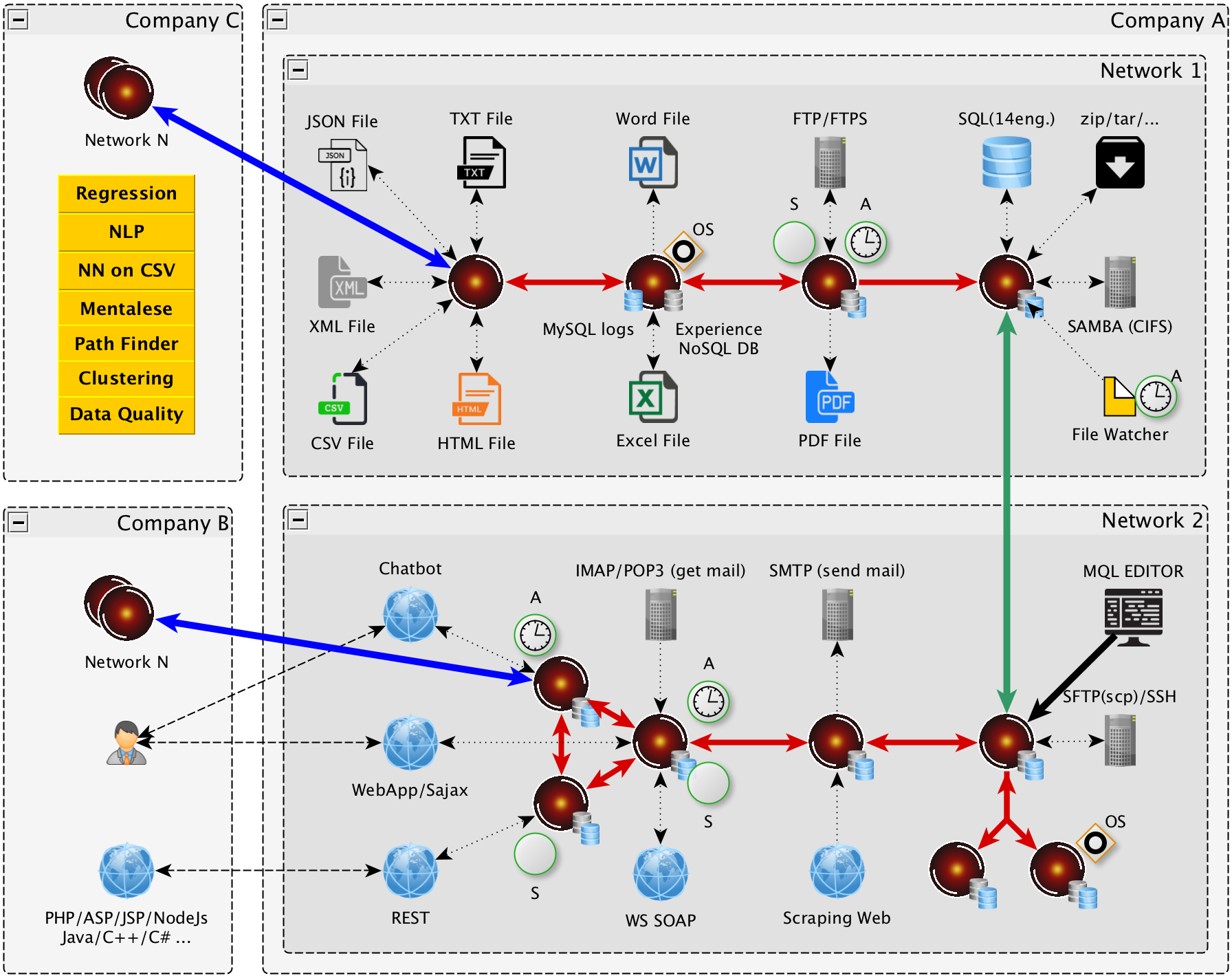

MentDB Weak is a real development platform: ETL + ESB + SOA + WebAPP + AI(Weak) = all in one. You control your company data as if it were a single database, you build applications, and you train prediction machines... Even with different networks or software! The project represents 18 years of R&D.

Extract Transform and Load

MentDB Weak lets you run Extract, Transform and Load (ETL) processes. Automate your data transfers between your software (for example: Excel, text, CSV, XML, JSON or email to your databases). Effectively manage the business logs generated by your processes. Search them quickly to find bugs.
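
To make the three ETL stages concrete, here is a minimal sketch in Python rather than MQL, since MQL syntax is not shown in this document. It does not use any MentDB API: the CSV content, the `payments` table, and the function names are all assumptions made for illustration. Rows that fail conversion are kept aside, which is roughly the role a business log plays.

```python
import csv
import io
import sqlite3

# Hypothetical input: in practice this would come from a file or an export.
CSV_TEXT = """name,amount
alice, 10.5
bob,3
carol,not-a-number
"""

def extract(text):
    """Extract: parse CSV text into a list of dict rows."""
    return list(csv.DictReader(io.StringIO(text)))

def transform(rows):
    """Transform: normalize names, convert amounts, set aside bad rows."""
    clean, rejected = [], []
    for row in rows:
        try:
            clean.append((row["name"].strip().title(), float(row["amount"])))
        except ValueError:
            rejected.append(row)  # keep the row so it can be logged
    return clean, rejected

def load(conn, records):
    """Load: insert the cleaned records into a database table."""
    conn.execute("CREATE TABLE IF NOT EXISTS payments (name TEXT, amount REAL)")
    conn.executemany("INSERT INTO payments VALUES (?, ?)", records)
    conn.commit()

conn = sqlite3.connect(":memory:")  # in-memory database, for the example only
clean, rejected = transform(extract(CSV_TEXT))
load(conn, clean)
stored = conn.execute("SELECT name, amount FROM payments ORDER BY name").fetchall()
print(stored)
print(len(rejected), "row(s) rejected")
```

Keeping the rejected rows separate from the loaded ones is what makes it possible to search afterwards for why a transfer was incomplete.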

Enterprise Service Bus

MentDB Weak also allows you to manage business processes over time. Run your processing jobs asynchronously. You send a request to the system, which receives it, returns a processing id, and processes the request when the time is right: either when a condition is met (the condition can come from any MentDB server), or when a processor becomes available (the number of simultaneous executions is configurable).
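
The asynchronous pattern described above (submit a request, get an id back immediately, run later when a condition is met or a worker is free) can be sketched as follows. This is an illustration in Python, not MentDB's implementation or API: `JobQueue`, `max_simultaneous`, and the `condition` parameter are hypothetical names. For simplicity a waiting job occupies a worker slot, which a real scheduler would avoid.

```python
import itertools
import threading
from concurrent.futures import ThreadPoolExecutor

class JobQueue:
    """Toy asynchronous job queue: submit() returns an id immediately."""

    def __init__(self, max_simultaneous=2):
        # max_simultaneous caps how many jobs can run at the same time.
        self._pool = ThreadPoolExecutor(max_workers=max_simultaneous)
        self._ids = itertools.count(1)
        self._futures = {}
        self._lock = threading.Lock()

    def submit(self, func, *args, condition=None):
        """Queue func(*args); if condition (an Event) is given, wait for it."""
        def run():
            if condition is not None:
                condition.wait()  # simplification: this holds a worker slot
            return func(*args)
        with self._lock:
            job_id = next(self._ids)
            self._futures[job_id] = self._pool.submit(run)
        return job_id

    def status(self, job_id):
        return "done" if self._futures[job_id].done() else "pending"

    def result(self, job_id, timeout=None):
        return self._futures[job_id].result(timeout=timeout)

    def shutdown(self):
        self._pool.shutdown(wait=True)

queue = JobQueue(max_simultaneous=2)
gate = threading.Event()                        # stands in for an external condition
first = queue.submit(pow, 2, 10)                # runs as soon as a worker is free
second = queue.submit(sum, [1, 2, 3], condition=gate)
first_result = queue.result(first)
status_before = queue.status(second)            # still waiting on the condition
gate.set()                                      # the condition becomes true
second_result = queue.result(second)
queue.shutdown()
print(first, second, first_result, status_before, second_result)
```

The caller only ever holds the id, so it can come back later to ask for the status or the result without blocking on the job itself.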

Service Oriented Architecture

MentDB Weak is a self-secure SOA server. Any process developed with MentDB is automatically RESTful. You no longer develop web services. All you have to do is grant rights. All your applications will be able to communicate with MentDB. Access data from all your networks, securely, as if there were only one. Take control of all your MentDB servers from a single point.

Web Application Framework

MentDB also allows you to create web applications in HTML / CSS with a new technology, SAJAX. There are no web services to create and no HTTPS security to handle yourself (it is managed by the user session), and AJAX development happens on the server side. Your applications will be built quickly...

Weak Artificial Intelligence

MentDB Weak has "ready to use" algorithms for practicing "weak" artificial intelligence. Check your data quality, run analyses, train neural networks and do machine learning. Create chatbots in MQL.

All ETL + ESB + SOA + WebAPP + AI(Weak) functions can be controlled with the MentDB Weak Editor...

Here are some applications developed with MentDB

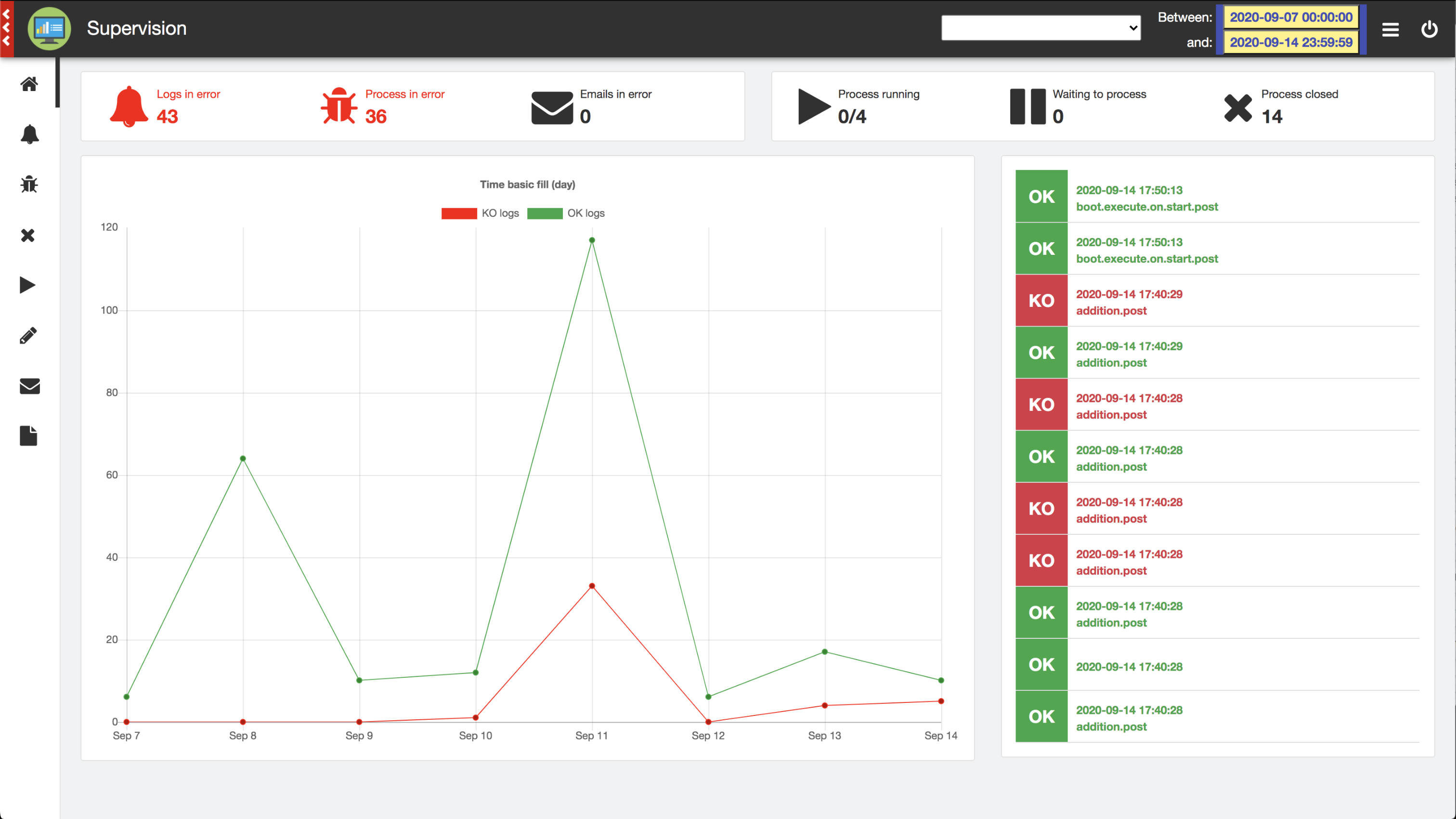

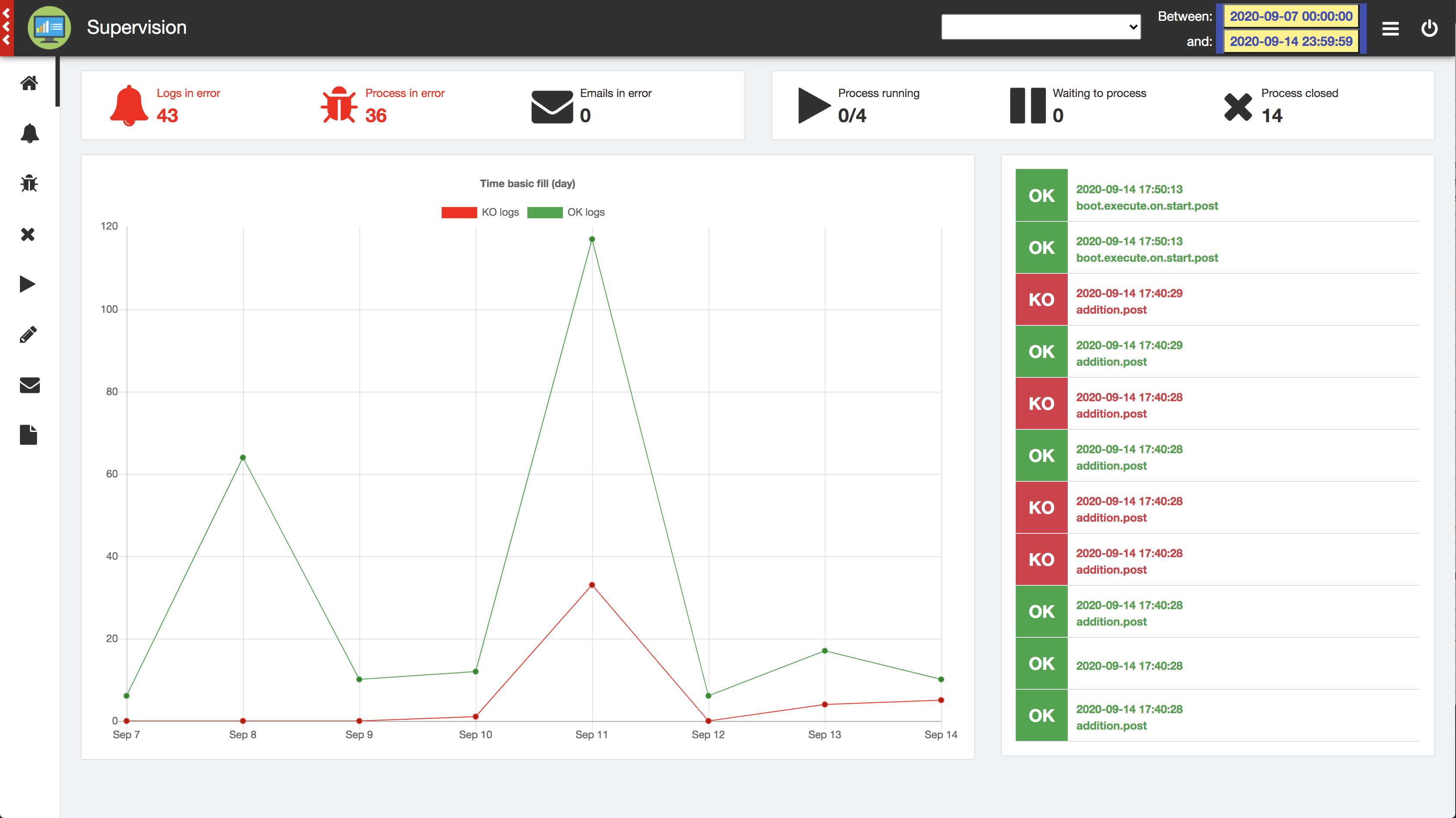

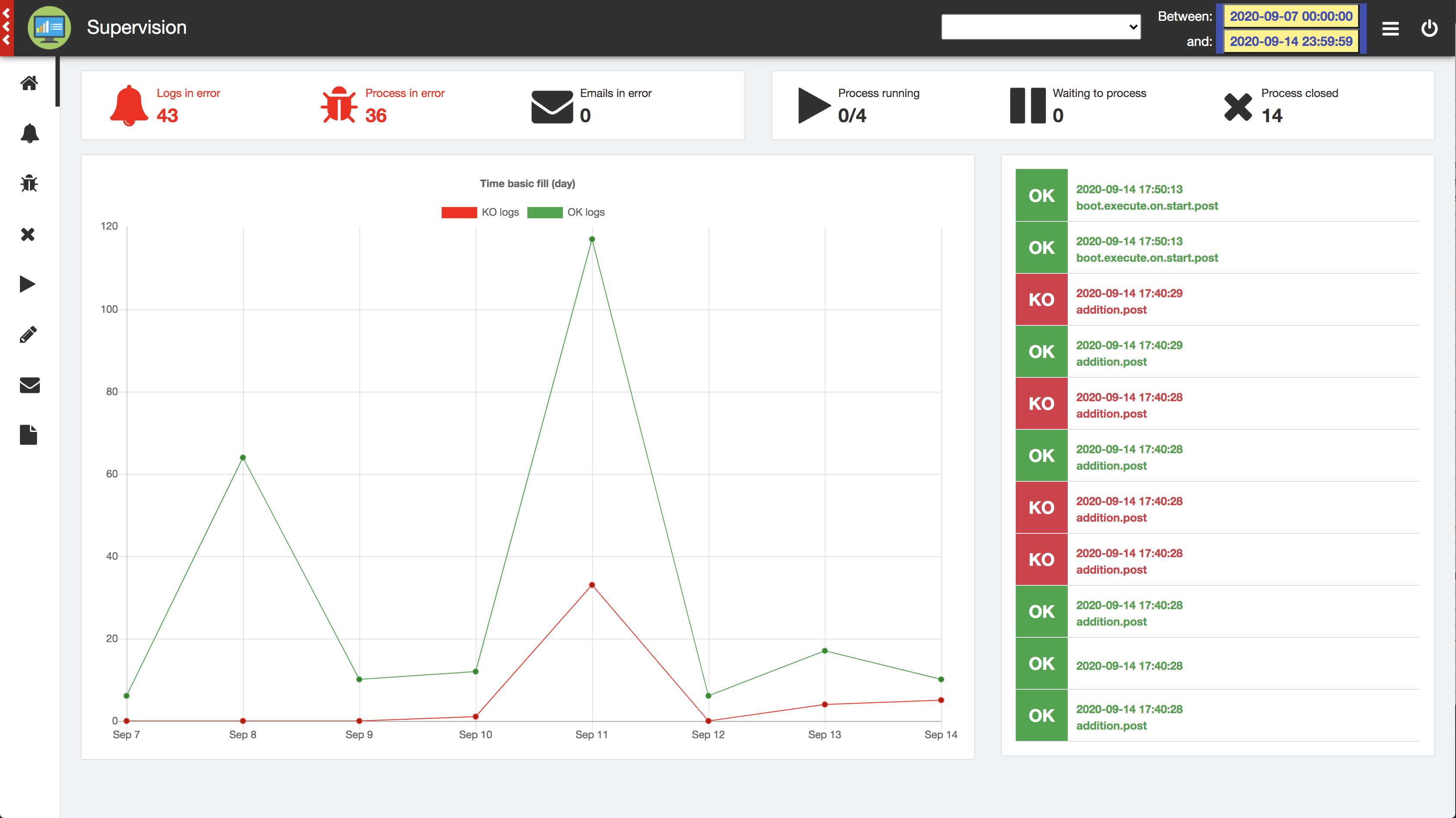

Supervision

This application allows you to monitor your MentDB Weak server, view all running processes, and search the recorded logs to understand why one of your business processes failed. The fix will be quick...

Download MQL Source code...

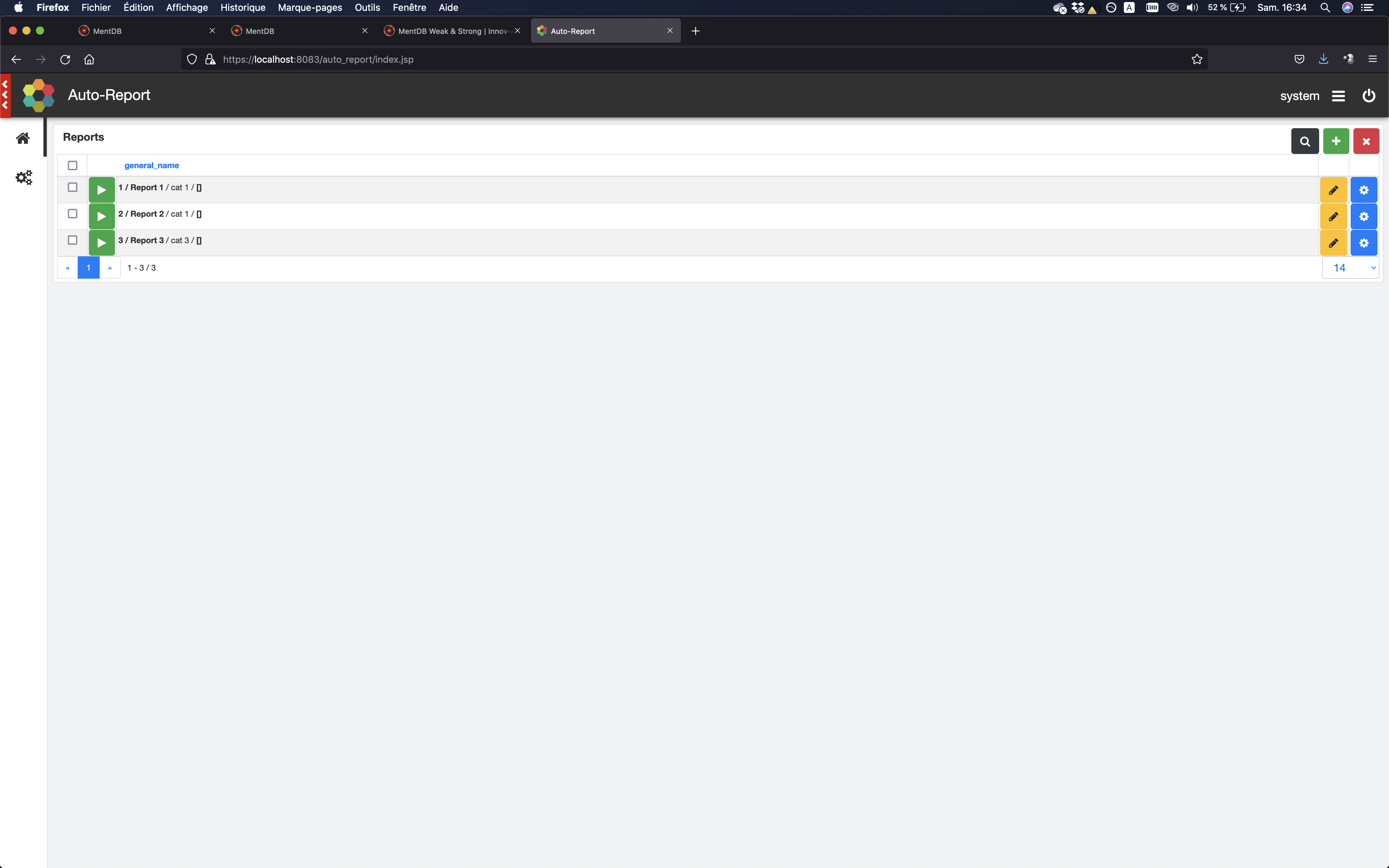

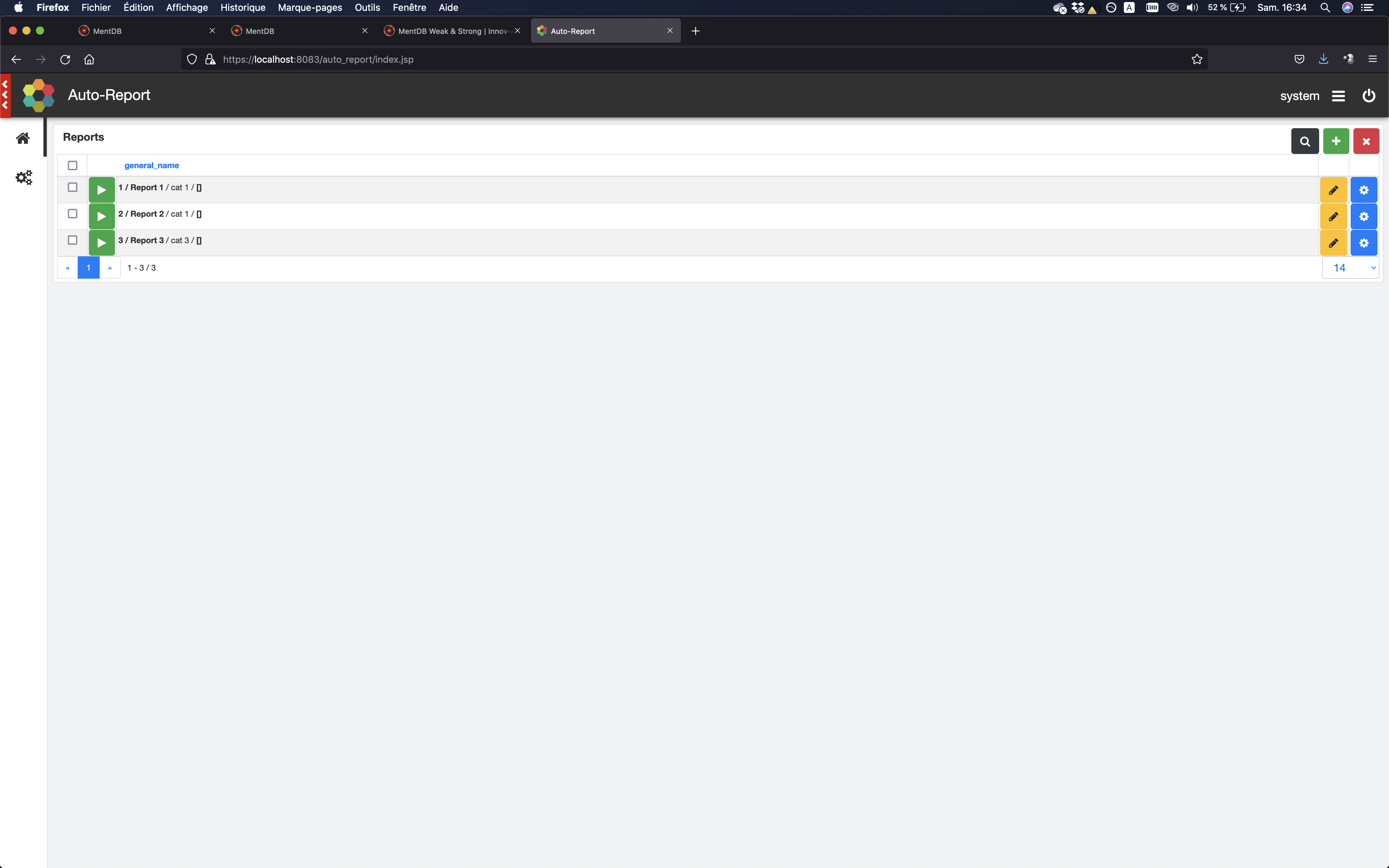

Auto Report

This application allows you to generate and receive reports from your information system by email. You can also give rights to users to download their personalized report directly online. Different output file formats are possible...

Download MQL Source code...

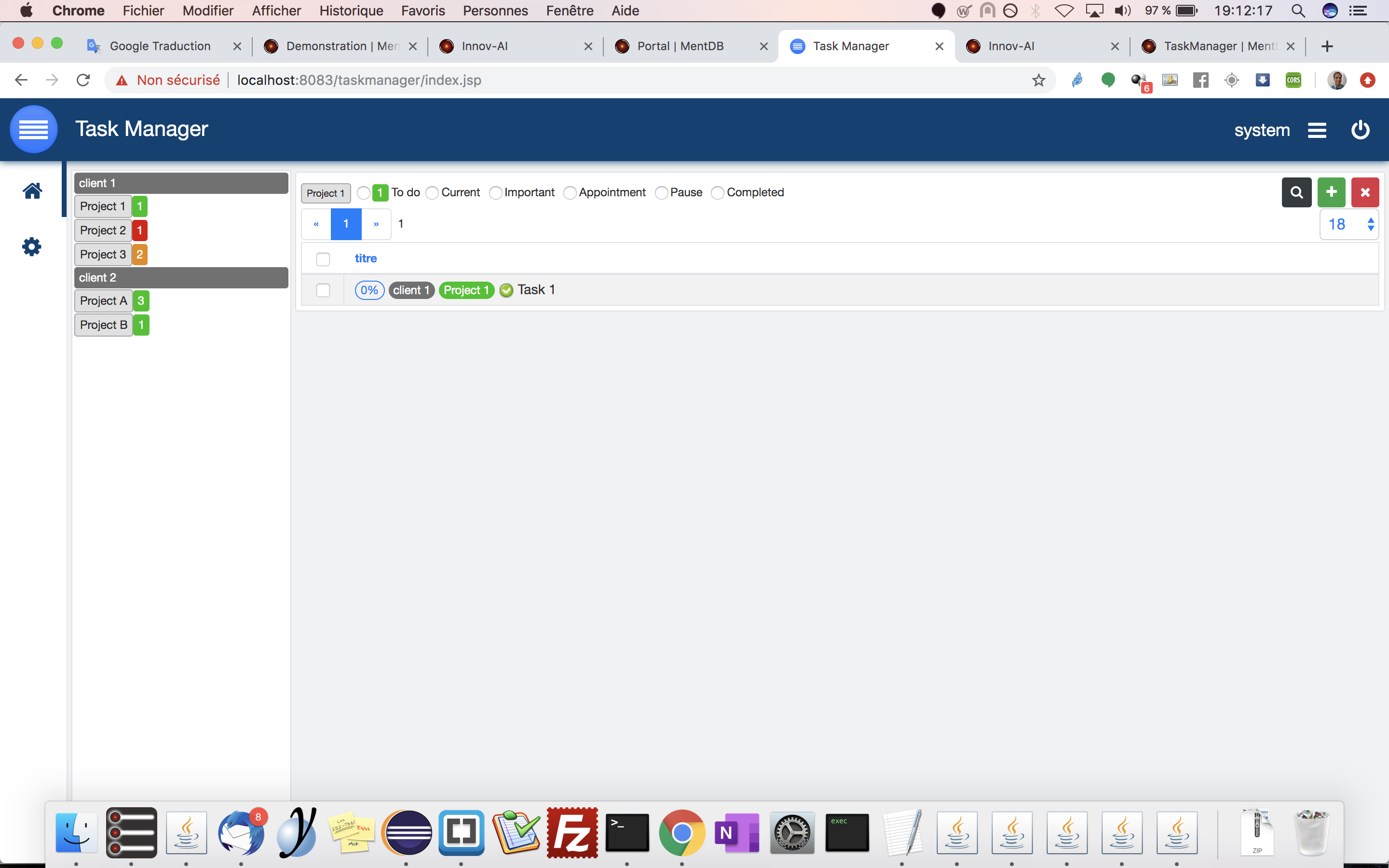

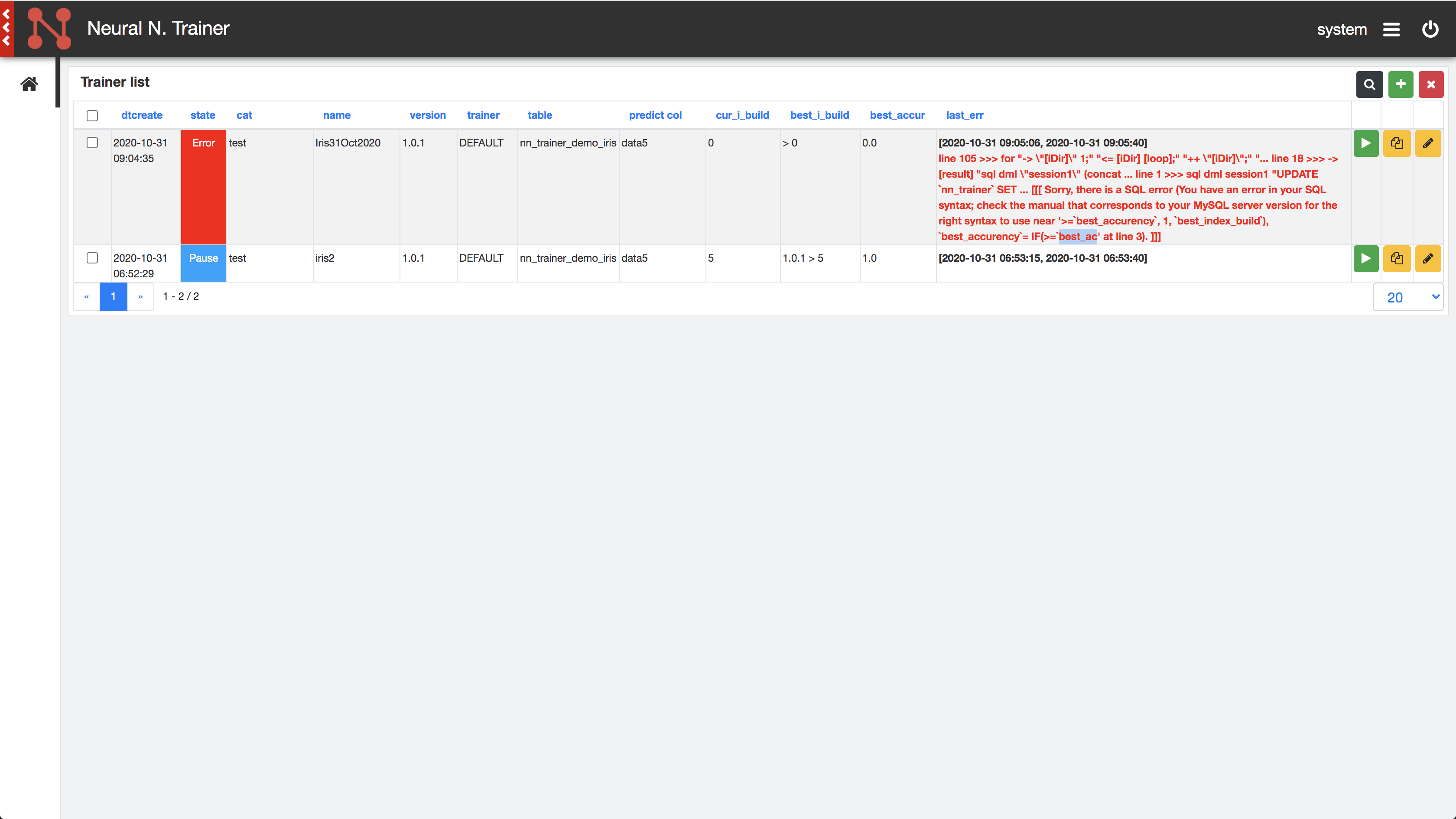

Task Manager

There are many task managers, but few that are simple and effective... You can create clients, groups and tasks according to different states. A good sample application developed with MentDB Weak...

Download MQL Source code...

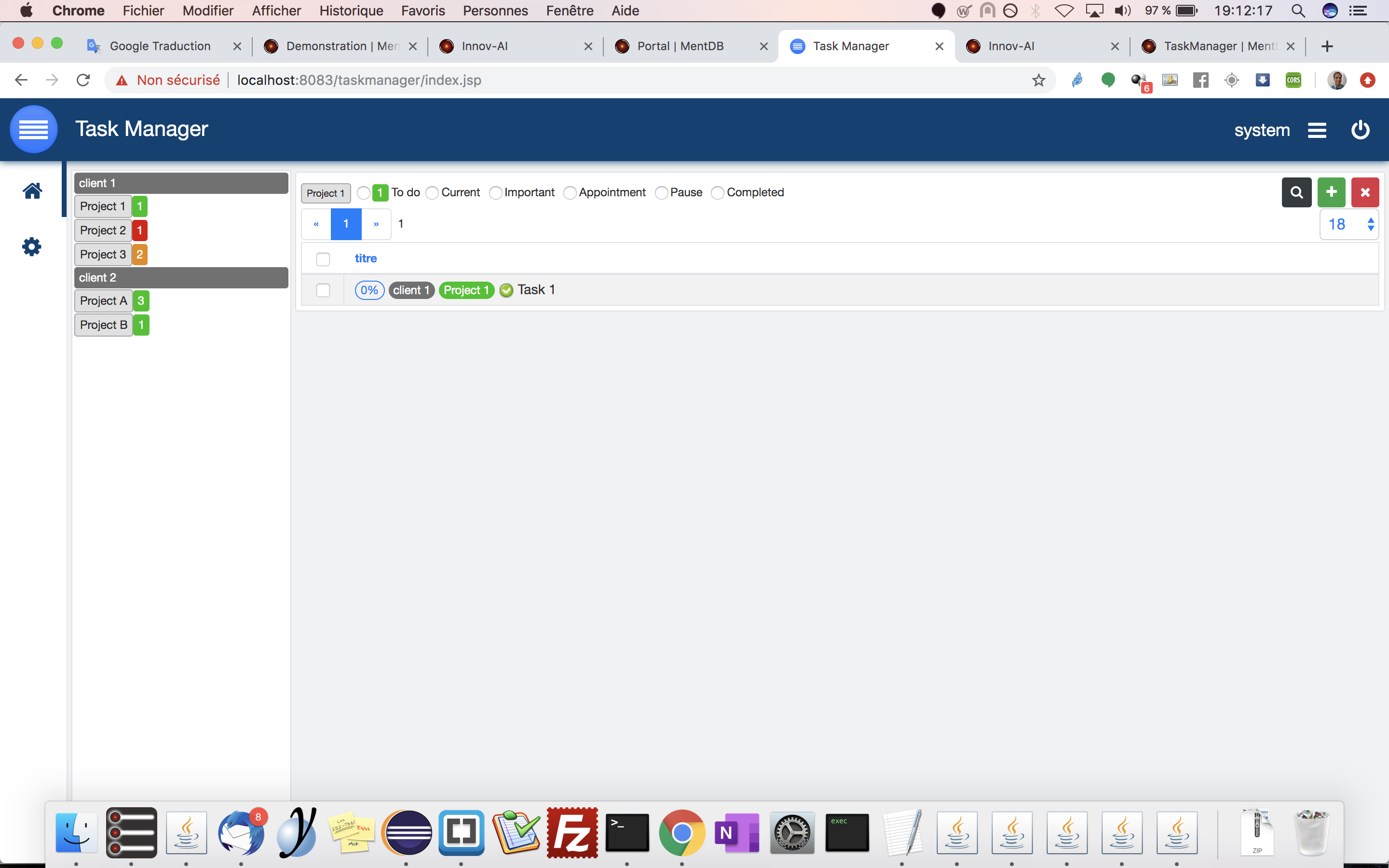

Time Tracker

You spend time on client missions, and you would like to know how much time you spend on each of them? This small application is made for you. Very effective in mobile mode...

Download MQL Source code...

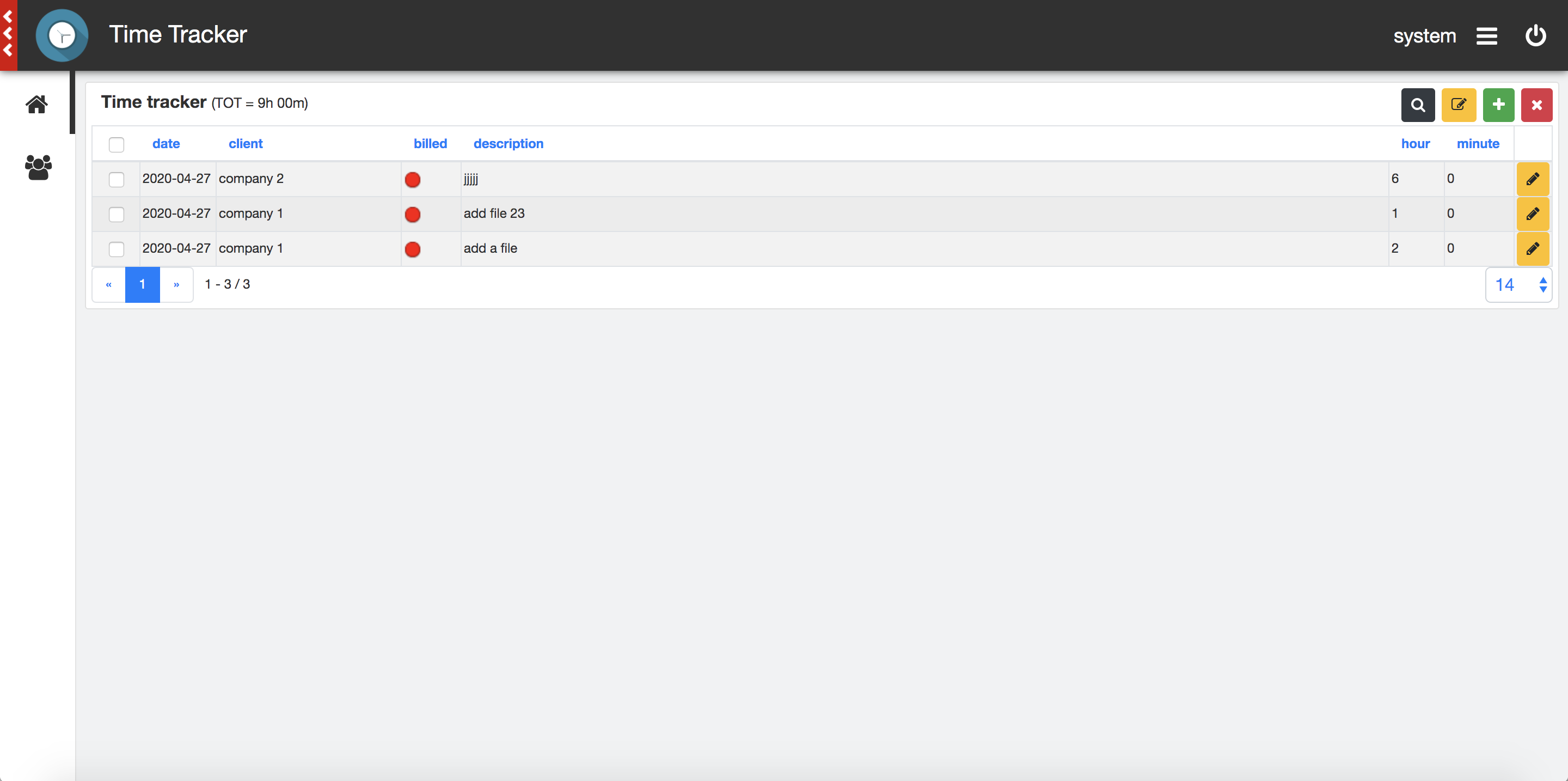

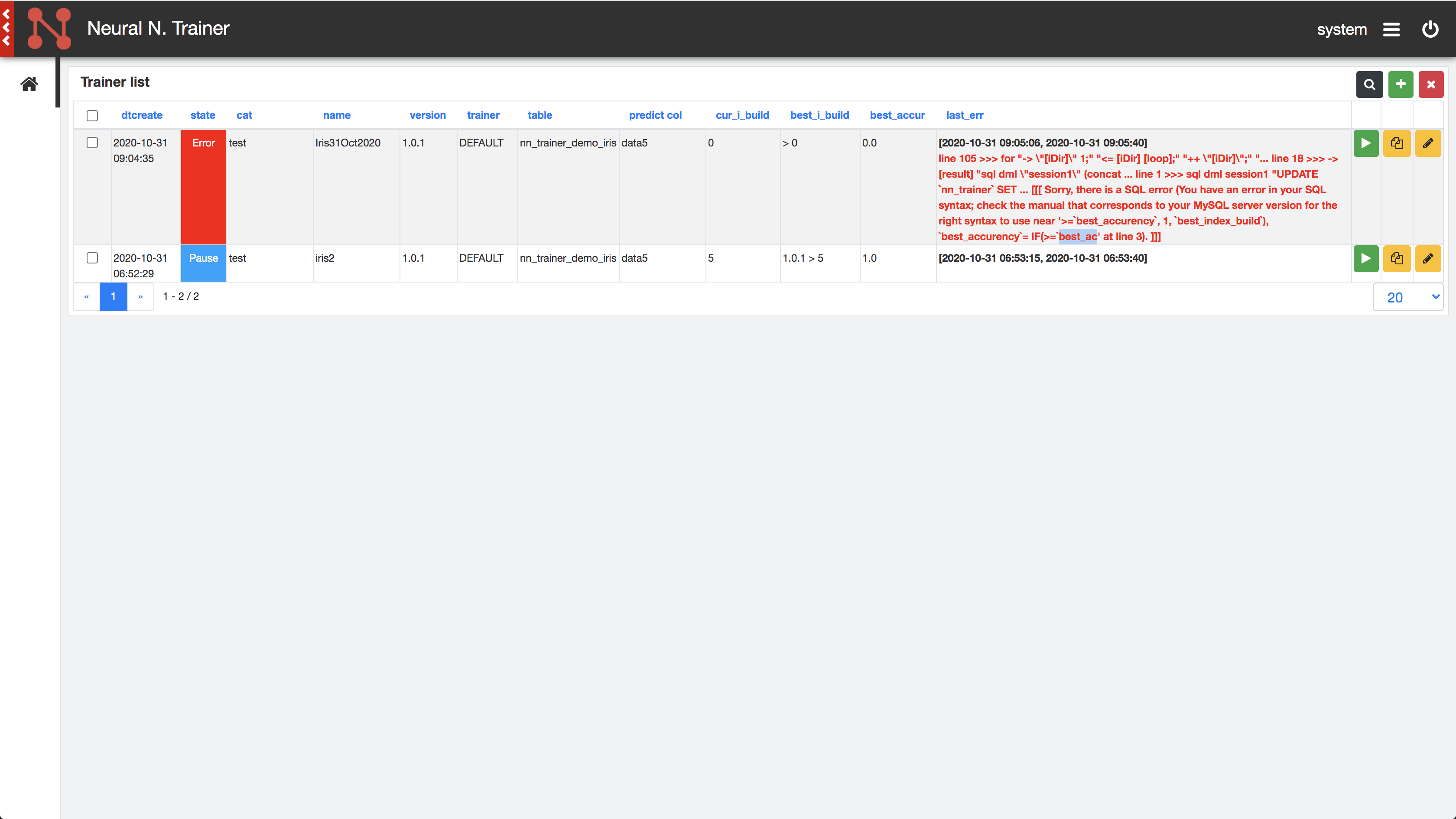

Neural Network Trainer

Train neural networks on CSV data in the background. This application is very practical for simple neural networks. Feed-forward processing...
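
For readers unfamiliar with the term, a feed-forward pass pushes the inputs through each layer in turn, with no loops back. The sketch below shows only that forward pass in plain Python; it is not the application's code, and the sigmoid activation, the layer layout, and the hand-picked weights are assumptions chosen so the result is easy to check. Training (adjusting the weights from CSV rows) is not shown.

```python
import math

def sigmoid(x):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def dense(inputs, weights, biases):
    """One fully connected layer: sigmoid(w . x + b) for each neuron."""
    return [
        sigmoid(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]

def feed_forward(inputs, layers):
    """Pass the inputs through each (weights, biases) layer in order."""
    activations = inputs
    for weights, biases in layers:
        activations = dense(activations, weights, biases)
    return activations

# Hypothetical hand-picked weights: 2 inputs -> 2 hidden neurons -> 1 output.
layers = [
    ([[0.0, 0.0], [1.0, -1.0]], [0.0, 0.0]),  # hidden layer
    ([[1.0, 1.0]], [-1.0]),                   # output layer
]
output = feed_forward([0.5, 0.5], layers)
print(output)
```

With these weights every neuron receives a weighted sum of zero, so each activation is sigmoid(0) = 0.5, which makes the forward pass easy to verify by hand.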

Download MQL Source code...

Concert Manager

Do you have a small band and want to centralize the lyrics and chords of the songs you are going to play? This application is made for you! Lyric printing has been optimized to make the most of an A4 sheet...

Download MQL Source code...
